From API call to autonomous action: how agents consume demographic intelligence

Published 10 April 2026 · 9 min read

The conversation about AI agents and data quality often stays at the conceptual level: data needs to be fresh, structured, trustworthy. All true. But for teams building agentic applications, the practical question is more specific: what does the interaction between an agent and a data source look like at runtime? What happens between the moment an agent decides it needs demographic context and the moment it acts on what it receives?

This piece walks through that interaction in concrete terms, using Cogstrata's API as the reference implementation. The goal is not to sell a product — it is to illustrate the data architecture patterns that make the difference between an agent that makes reliable decisions and one that produces plausible-sounding outputs built on uncertain foundations.

The query: what an agent asks for

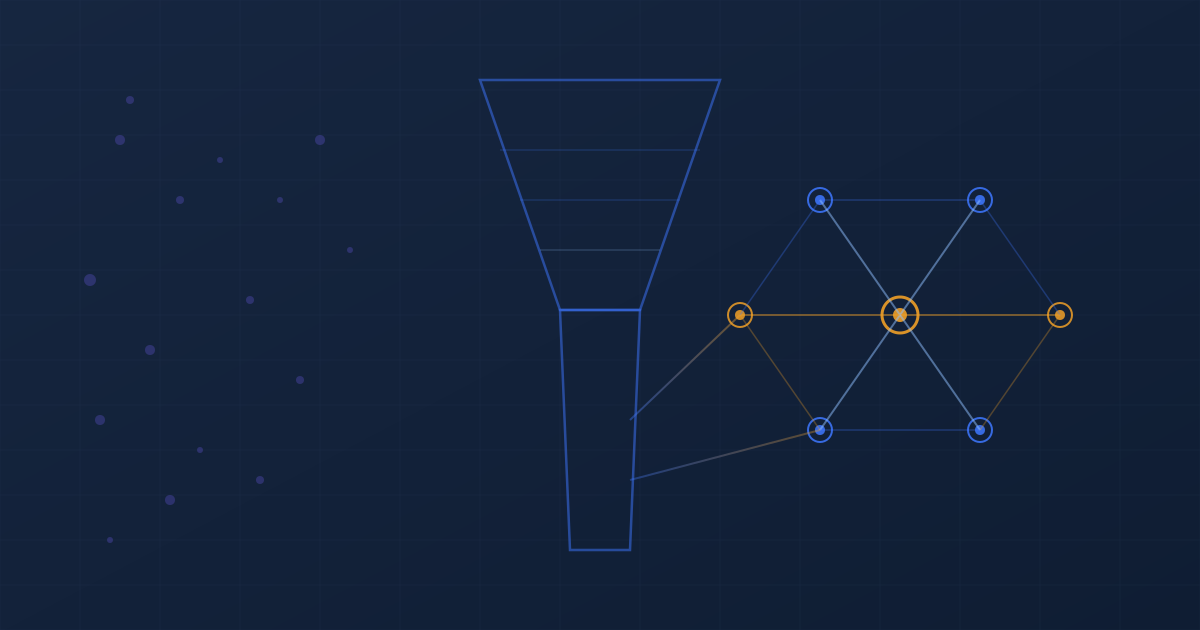

In most agentic architectures, the agent does not know in advance what data it will need. It encounters a decision point — a customer record to evaluate, a segment to target, a risk threshold to assess — and determines at that moment what contextual information would improve its decision. This is fundamentally different from a batch analytics workflow, where the entire dataset is ingested upfront and the analyst explores it at leisure.

When an agent queries a demographic data source, the query is typically narrow and purpose-driven. It might be: given this postcode, what is the current financial stress profile? Or: for this set of 500 postcodes, which ones have experienced significant demographic change in the past 90 days? The agent is not browsing — it is seeking specific signals to resolve a specific decision.

This query pattern has implications for how the data must be organised. The data source needs to support point lookups with low latency, attribute-level filtering so the agent can request only what it needs, and temporal queries that allow the agent to ask not just for current values but for change over time. A data source optimised for batch export — the standard delivery model for traditional providers — handles these query patterns poorly, because it was designed for a different consumption model.
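The sketch below makes these query patterns concrete. It is a minimal Python example of how an agent might build two requests: a point lookup restricted to named attributes, and a temporal query for change over a window. The base URL, paths and parameter names (`postcode`, `attributes`, `window_days`, `min_change`) are hypothetical placeholders, not Cogstrata's documented API. The attribute name `median_income` is also illustrative.

```python
from urllib.parse import urlencode

# Hypothetical endpoint; not a documented Cogstrata URL.
BASE_URL = "https://api.example.com/v1/demographics"


def point_lookup(postcode: str, attributes: list[str]) -> str:
    """Low-latency single-postcode lookup, asking only for the named attributes."""
    params = {"postcode": postcode, "attributes": ",".join(attributes)}
    return f"{BASE_URL}/lookup?{urlencode(params)}"


def change_query(postcodes: list[str], attribute: str,
                 window_days: int, min_change: float) -> str:
    """Temporal query: which postcodes moved by at least min_change in the window?"""
    params = {
        "postcodes": ",".join(postcodes),
        "attribute": attribute,
        "window_days": window_days,
        "min_change": min_change,
    }
    return f"{BASE_URL}/changes?{urlencode(params)}"


lookup_url = point_lookup("SW1A 1AA", ["financial_stress_score", "median_income"])
changes_url = change_query(["M1 1AE", "LS1 4AP"], "financial_stress_score", 90, 1.0)
```

A set of 500 postcodes would make the query string long, so a real service would more plausibly accept such batches in a POST body. The point of the sketch is the shape of the request: narrow, attribute-scoped and time-aware.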

The response: what the agent receives

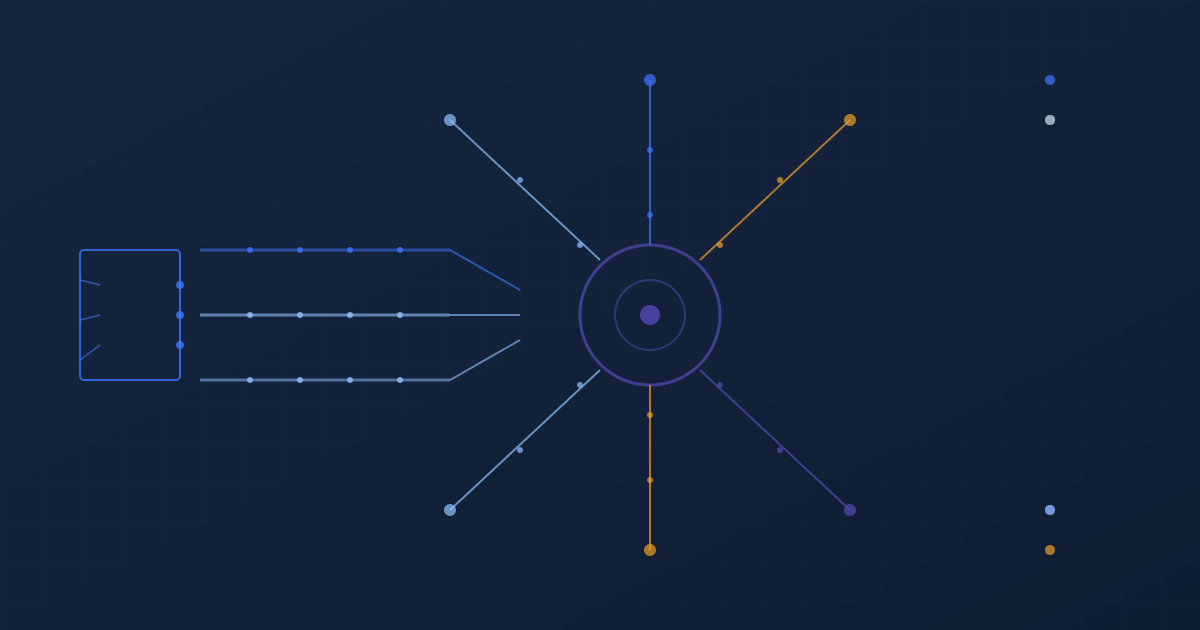

The response is where an agent-ready data source differs most from a traditional one. A traditional data product returns values: flat key-value pairs mapping attribute names to numbers or categories. An agent-ready data source returns values wrapped in a metadata envelope that gives the agent the information it needs to use those values responsibly.

Consider a response that includes a financial stress score for a given postcode. In a flat model, the agent receives something like: financial_stress_score: 7.3. That is all it has to work with. In a meta-rich model, the agent receives the score alongside its last update timestamp, the confidence band, the contributing data sources, the magnitude of change since the previous computation, and the semantic type indicating this is a composite derived score rather than a direct observation.
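Here is a minimal sketch of the flat and meta-rich shapes side by side, assuming a JSON response. The field names (`updated_at`, `confidence`, `band`, `sources`, `change_since_previous`, `semantic_type`) are hypothetical. Every number except the 7.3 score is an illustrative placeholder, not real data.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum


class SemanticType(Enum):
    OBSERVATION = "observation"
    ESTIMATE = "estimate"
    CLASSIFICATION = "classification"
    COMPOSITE = "composite"


@dataclass(frozen=True)
class AttributeValue:
    name: str
    value: float
    updated_at: datetime           # last update timestamp
    confidence: float              # 0-1 confidence score
    band: tuple[float, float]      # confidence band around the value
    sources: tuple[str, ...]       # contributing data sources
    change_since_previous: float   # delta versus the previous computation
    semantic_type: SemanticType    # e.g. composite rather than observation


def parse_attribute(name: str, payload: dict) -> AttributeValue:
    """Parse one attribute from a (hypothetical) metadata envelope."""
    return AttributeValue(
        name=name,
        value=float(payload["value"]),
        updated_at=datetime.fromisoformat(payload["updated_at"]),
        confidence=float(payload["confidence"]),
        band=(float(payload["band"][0]), float(payload["band"][1])),
        sources=tuple(payload["sources"]),
        change_since_previous=float(payload["change_since_previous"]),
        semantic_type=SemanticType(payload["semantic_type"]),
    )


# Flat model: the value and nothing else.
flat = {"financial_stress_score": 7.3}

# Meta-rich model: the same value inside an envelope (illustrative metadata).
enveloped = {
    "financial_stress_score": {
        "value": 7.3,
        "updated_at": "2026-04-02T00:00:00+00:00",
        "confidence": 0.92,
        "band": [6.8, 7.8],
        "sources": ["source_a", "source_b"],
        "change_since_previous": 0.4,
        "semantic_type": "composite",
    }
}

score = parse_attribute("financial_stress_score", enveloped["financial_stress_score"])
```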

The difference this makes to agent behaviour is substantial. An agent that receives a bare score of 7.3 can only compare it numerically against a threshold. An agent that receives the full metadata envelope can make conditional decisions: if the score is high-confidence and recently updated, act on it directly; if the confidence is low, seek corroborating signals before proceeding; if the score has changed significantly since the last query, flag the change for review rather than treating the new value as stable.
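The branching just described can be sketched as a single function. A bare score supports nothing richer than `score > threshold`, while this version reads the envelope. The thresholds and return labels are assumptions for illustration. Checking for a significant change before anything else is one reasonable ordering, not the only one.

```python
from datetime import datetime, timedelta, timezone

# Illustrative thresholds (assumptions, not values from any real API).
HIGH_CONFIDENCE = 0.85
LOW_CONFIDENCE = 0.65
MAX_AGE = timedelta(days=30)
SIGNIFICANT_CHANGE = 1.0  # in the score's own units


def decide(confidence: float, updated_at: datetime,
           change_since_previous: float, now: datetime) -> str:
    """Choose a next step from one attribute's metadata envelope."""
    # A large jump since the last computation is flagged first, so the
    # new value is not silently treated as stable.
    if abs(change_since_previous) >= SIGNIFICANT_CHANGE:
        return "flag_for_review"
    # Low confidence: look for corroborating signals before proceeding.
    if confidence < LOW_CONFIDENCE:
        return "seek_corroboration"
    # High confidence and recently updated: act directly.
    if confidence >= HIGH_CONFIDENCE and now - updated_at <= MAX_AGE:
        return "act"
    # Middling confidence or stale data: also gather more evidence first.
    return "seek_corroboration"


now = datetime(2026, 4, 10, tzinfo=timezone.utc)
print(decide(0.92, now - timedelta(days=8), 0.4, now))  # act
```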


The interpretation layer: how agents process structured attributes

Between receiving the data and taking action, the agent must interpret what it has received. This is where the semantic structure of the data product becomes critical. An agent operating on well-structured demographic data can build interpretation logic that is generalised and maintainable. An agent working with poorly structured data must build bespoke interpretation for every attribute, every source, and every edge case.

Semantic typing is the foundation. When every attribute carries a type tag — observation, estimate, classification, composite — the agent can apply consistent interpretation rules. Observations are treated as facts (within their temporal window). Estimates are treated as probabilistic. Classifications are treated as categorical assignments that may have boundary cases. Composites are treated as derived signals whose meaning depends on their component parts. This type system allows the agent to handle hundreds of attributes with a consistent framework rather than hand-coding interpretation logic for each one.
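Here is a sketch of type-driven interpretation. It assumes each attribute arrives as a dict with a `semantic_type` tag drawn from the four types named above. The handler names, the optional `valid_until` and `components` fields, and the 0.7 boundary-case cut-off are hypothetical.

```python
from typing import Callable


def interpret_observation(attr: dict) -> dict:
    # A fact, but only within its temporal window (hypothetical valid_until field).
    return {"kind": "fact", "value": attr["value"], "valid_until": attr.get("valid_until")}


def interpret_estimate(attr: dict) -> dict:
    # Probabilistic: keep the band, not just the point value.
    low, high = attr["band"]
    return {"kind": "probabilistic", "value": attr["value"], "range": (low, high)}


def interpret_classification(attr: dict) -> dict:
    # Categorical; a weak assignment (below an assumed 0.7) marks a boundary case.
    return {"kind": "category", "value": attr["value"],
            "boundary_case": attr.get("confidence", 1.0) < 0.7}


def interpret_composite(attr: dict) -> dict:
    # Derived signal: carry its components so its meaning stays traceable.
    return {"kind": "derived", "value": attr["value"],
            "components": list(attr.get("components", []))}


INTERPRETERS: dict[str, Callable[[dict], dict]] = {
    "observation": interpret_observation,
    "estimate": interpret_estimate,
    "classification": interpret_classification,
    "composite": interpret_composite,
}


def interpret(attr: dict) -> dict:
    """Dispatch on the type tag instead of hand-coding rules per attribute."""
    try:
        handler = INTERPRETERS[attr["semantic_type"]]
    except KeyError:
        raise ValueError(f"unknown semantic type: {attr.get('semantic_type')!r}") from None
    return handler(attr)
```

The dispatch table is the design choice that matters. Supporting a new attribute means tagging it correctly, not writing new interpretation code.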

Confidence scoring enables risk calibration. An agent that knows a given attribute has a confidence score of 0.92 can treat it differently from one scored at 0.61. The agent does not need to understand why the confidence differs — it just needs the signal to adjust its behaviour. For a credit risk agent, this might mean the difference between an automated decision and a referral to a human reviewer. For a marketing agent, it might mean the difference between a targeted offer and a generic message.
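The same confidence signal can map to different actions in different domains, and a small policy table expresses that. The use-case names, action labels and thresholds below are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    min_confidence: float   # at or above this, take the confident path
    confident_action: str
    cautious_action: str


# Hypothetical per-use-case policies; thresholds are illustrative only.
POLICIES = {
    "credit_risk": Policy(0.90, "automated_decision", "refer_to_human_reviewer"),
    "marketing": Policy(0.75, "targeted_offer", "generic_message"),
}


def choose_action(use_case: str, confidence: float) -> str:
    """Map an attribute's confidence score to an action under a use-case policy."""
    policy = POLICIES[use_case]
    if confidence >= policy.min_confidence:
        return policy.confident_action
    return policy.cautious_action
```

With these numbers, a confidence of 0.80 is enough for a targeted marketing offer but still sends a credit decision to a human reviewer. The agent logic is identical in both cases; only the policy differs.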

Provenance enables attribution and audit. In regulated industries — financial services, insurance, healthcare — the ability to trace a decision back to its data inputs is not optional. When an agent makes an underwriting decision based on demographic intelligence, the provenance metadata attached to each attribute provides the audit trail. Which data sources contributed? When were they last refreshed? What was the confidence at the time of the decision? This is not just good practice — in the context of FCA Consumer Duty and similar regulatory frameworks, it is increasingly a requirement.
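One way to capture the audit trail is to snapshot each input's provenance at the moment of decision. That way, later refreshes of the data cannot change what the record says. The field names, identifier and JSON-lines format are assumptions. The sketch shows the idea only and does not say what FCA Consumer Duty specifically requires.

```python
import json
from datetime import datetime, timezone


def audit_record(decision_id: str, decision: str,
                 inputs: dict[str, dict], decided_at: datetime) -> str:
    """Freeze the provenance of every input at decision time as one JSON line."""
    snapshot = {
        name: {
            "value": meta["value"],
            "sources": list(meta["sources"]),              # which sources contributed
            "last_refreshed": meta["updated_at"],          # assumed ISO-8601 string
            "confidence_at_decision": meta["confidence"],  # confidence at the time
        }
        for name, meta in inputs.items()
    }
    return json.dumps(
        {
            "decision_id": decision_id,
            "decision": decision,
            "decided_at": decided_at.isoformat(),
            "inputs": snapshot,
        },
        sort_keys=True,
    )


line = audit_record(
    "decision-0001",  # hypothetical identifier
    "refer_to_human_reviewer",
    {"financial_stress_score": {"value": 7.3, "sources": ["source_a", "source_b"],
                                "updated_at": "2026-04-02T00:00:00+00:00",
                                "confidence": 0.61}},
    datetime(2026, 4, 10, tzinfo=timezone.utc),
)
```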

The action: from data to decision

The final step in the agent's workflow is the action itself — the decision that is taken, the recommendation that is made, the process that is triggered. What makes agent-ready data distinctive at this stage is that the metadata layer allows the agent to modulate its actions based on data quality, not just data values.

An agent with access to meta-rich data can implement graduated response patterns. High-confidence, recently updated data triggers direct action — an automated credit decision, a personalised offer, a risk flag. Moderate-confidence data triggers conditional action — proceed but request confirmation, or apply a wider tolerance band. Low-confidence data triggers caution — defer the decision, request additional signals, or route to a human reviewer. These graduated patterns produce agent behaviour that is both more commercially effective and more defensible under regulatory scrutiny.
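Graduated response can be sketched by grading a whole decision on its weakest input: the lowest confidence and the oldest update among everything the decision relies on. The tier names and cut-offs are assumptions for illustration. So is the rule that stale but high-confidence data drops to the conditional tier.

```python
from datetime import datetime, timedelta, timezone
from enum import Enum


class Tier(Enum):
    DIRECT = "direct_action"            # automated decision, offer or flag
    CONDITIONAL = "conditional_action"  # proceed, but confirm or widen tolerance
    CAUTIOUS = "defer_or_escalate"      # defer, gather signals, or route to a human


# Illustrative cut-offs (assumptions to be tuned per deployment).
HIGH, MODERATE = 0.85, 0.65
FRESH = timedelta(days=30)


def response_tier(inputs: list[dict], now: datetime) -> Tier:
    """Grade a decision by its weakest input: lowest confidence, oldest update."""
    if not inputs:
        return Tier.CAUTIOUS  # no evidence at all
    weakest = min(i["confidence"] for i in inputs)
    oldest = max(now - i["updated_at"] for i in inputs)
    if weakest >= HIGH and oldest <= FRESH:
        return Tier.DIRECT
    if weakest >= MODERATE:
        return Tier.CONDITIONAL  # includes high-confidence but stale inputs
    return Tier.CAUTIOUS


now = datetime(2026, 4, 10, tzinfo=timezone.utc)
recent = now - timedelta(days=5)
inputs = [
    {"confidence": 0.92, "updated_at": recent},
    {"confidence": 0.61, "updated_at": recent},
]
print(response_tier(inputs, now).value)  # defer_or_escalate
```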

This is the practical payoff of investing in data architecture for agentic consumption. The agent does not just make decisions — it makes decisions whose quality tracks the quality of the evidence supporting them. It does not overreach when the data is thin. It does not hold back when the data is strong. It calibrates, continuously, based on signals that are embedded in the data itself.

For teams building agentic applications, this is the standard to evaluate data sources against. Not just: is the data accurate? But: does the data give my agent the information it needs to know how accurate it is, how current it is, and how much confidence to place in it? The data sources that answer yes to these questions are the ones that will power the next generation of autonomous decision-making. The ones that do not will produce agents that are impressively fluent and systematically unreliable.

This is part four of our Agent-Ready Data series

Exploring why demographic intelligence built for autonomous AI agents requires a fundamentally different approach to data architecture, curation, and delivery.
