From batch to always-on: the architecture of modern customer intelligence

Published 12 March 2026 · 9 min read

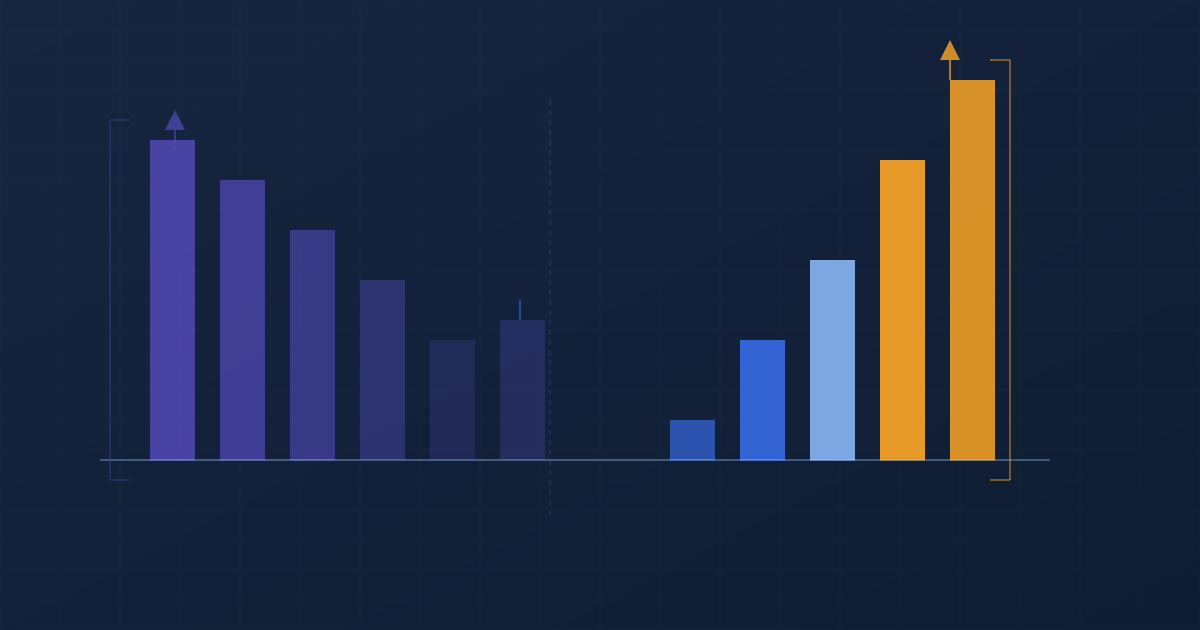

The dominant model for third-party demographic data has always been batch. A data provider collects, processes, and compiles a dataset. The dataset is licensed to clients, typically on an annual basis. The client's data team ingests the dataset, appends it to their customer records, and builds analytical models on top of it. A year later, the cycle repeats with a new batch refresh. This model has served the market for decades, and its limitations have been well understood and largely accepted as intrinsic to the category.

They are not intrinsic. They are a product of the technical and economic constraints that existed when the batch model was designed. Those constraints have changed materially in the past five years. The question now is not whether always-on data processing is technically possible — it demonstrably is — but whether the commercial and analytical case for it has been made clearly enough to drive adoption. This piece is an attempt to make that case.

Why batch was the only viable model

The batch model emerged from real constraints. Collecting and processing large volumes of geographic and demographic data (census records, electoral roll data, land registry transactions, survey responses) required significant computational infrastructure. Storage was expensive. Processing was slow. The economics of running continuous data processing pipelines against dozens of input data streams simply did not work at the price points a commercial data product had to meet to find a market.

The legacy providers (CACI, Experian, and others) built their products around these constraints and have kept the batch model even as the underlying economics have changed. This is a rational response to the institutional dynamics of large organisations: existing customers are integrated around the annual batch model, and both pricing structures and account relationships are built around it. The switching costs of moving to a different data architecture are real, and the revenue model for continuous refresh is less established.

But the constraints themselves are gone. Cloud computing has made continuous large-scale data processing economically viable at the price points required for a commercial data product. Machine learning models that process multiple input signals and produce stable, interpretable output attributes can run continuously against streaming data inputs. The fundamental architecture of always-on demographic intelligence is not a future possibility — it is a present reality.

What the Cogstrata pipeline actually looks like

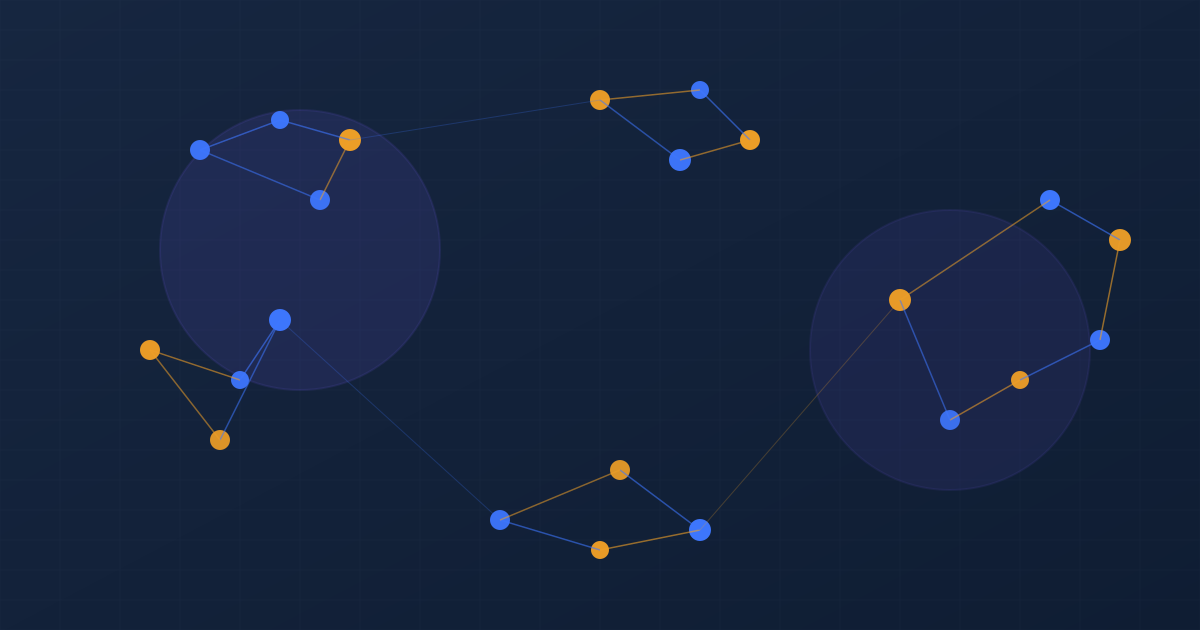

Cogstrata's data processing architecture is built around continuous ingestion of real-world event streams. The primary input sources include Land Registry transaction data (updated as transactions complete), Energy Performance Certificate (EPC) issuance (updated as certificates are registered), insolvency filing data from the Insolvency Service, Claimant Count data from the ONS, retail opening and closure data from planning authorities and commercial property feeds, and macroeconomic indicators including the Bank of England base rate, regional CPI indices, fuel and energy price benchmarks, and employment statistics.

Each of these inputs carries a different update frequency. Land Registry data updates on a rolling basis as transactions complete, and the lag between a transaction and its data becoming available has reduced significantly in recent years. Claimant Count data is published monthly. EPC registrations arrive continuously. Macroeconomic indicators are published on various schedules (some daily, some monthly) and are processed as they become available.
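The practical consequence of mixed cadences is that nothing waits for a batch to close: each feed is applied when it arrives, and the derived score is recomputed from the last-known value of every input. The minimal Python sketch below illustrates that pattern. The `SourceEvent` schema, the source names, the postcodes and the toy scoring function are all hypothetical; this is not Cogstrata's pipeline.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date
from typing import Callable

NATIONAL = "*"  # hypothetical marker for indicators that apply to every postcode


@dataclass(frozen=True)
class SourceEvent:
    """A single update from one input feed (hypothetical schema)."""
    source: str    # e.g. "bank_rate", "claimant_rate"
    postcode: str  # a postcode, or NATIONAL for UK-wide indicators
    value: float
    as_of: date


class PostcodeState:
    """Keeps the latest value of every input per postcode and recomputes a
    derived score whenever any input affecting that postcode changes."""

    def __init__(self, derive: Callable[[dict[str, float]], float]) -> None:
        self._derive = derive
        self._national: dict[str, float] = {}
        self._local: dict[str, dict[str, float]] = {}
        self.scores: dict[str, float] = {}

    def _recompute(self, postcode: str) -> None:
        # Local inputs override national ones if a source name appears in both.
        inputs = {**self._national, **self._local.get(postcode, {})}
        self.scores[postcode] = self._derive(inputs)

    def apply(self, event: SourceEvent) -> None:
        if event.postcode == NATIONAL:
            self._national[event.source] = event.value
            for postcode in self._local:  # a national change affects every known postcode
                self._recompute(postcode)
        else:
            self._local.setdefault(event.postcode, {})[event.source] = event.value
            self._recompute(event.postcode)


# Toy derivation: a missing input counts as 0.0 until its feed first reports.
state = PostcodeState(lambda x: x.get("bank_rate", 0.0) + x.get("claimant_rate", 0.0))
state.apply(SourceEvent("claimant_rate", "AB1 2CD", 3.2, date(2026, 2, 17)))  # monthly feed
state.apply(SourceEvent("bank_rate", NATIONAL, 4.0, date(2026, 3, 5)))        # rate decision
print(state.scores)  # {'AB1 2CD': 7.2}
```

A national indicator touches every postcode at once, which is why the sketch recomputes all known postcodes on a national event; at production scale that step would more plausibly run as a set-based job in the warehouse than as a Python loop.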

The processing layer applies eight proprietary inference models to these inputs at the postcode level, producing derived attributes that combine multiple signals into commercially interpretable scores. The Mortgage Pressure Delta, for example, combines Land Registry transaction data (to estimate the likely mortgage vintage and size for a given postcode), Bank of England rate data (to model the current monthly payment burden under prevailing rates), and Claimant Count data (to adjust for local employment conditions). The result is a continuously updated score that reflects current financial pressure at the postcode level, not a proxy derived from a historical survey.
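To make the three-signal combination concrete, here is a hypothetical Python sketch of a score in the spirit of the Mortgage Pressure Delta. It is not Cogstrata's proprietary model: the loan-to-value, lender spread, term and employment weight are assumptions, and the function names are illustrative. It uses the standard annuity formula for a capital-and-interest mortgage, compares the payment at an estimated origination rate with the payment at today's rate, and adds an adjustment for local claimant rates relative to the national rate.

```python
def monthly_payment(principal: float, annual_rate: float, months: int) -> float:
    """Monthly payment on a standard capital-and-interest (annuity) mortgage."""
    if months <= 0:
        raise ValueError("months must be positive")
    r = annual_rate / 12  # monthly interest rate
    if r == 0:
        return principal / months
    return principal * r / (1 - (1 + r) ** -months)


def mortgage_pressure_delta(
    median_price: float,             # median sale price in the postcode (Land Registry)
    origination_rate: float,         # typical mortgage rate in the estimated vintage year
    bank_rate: float,                # current Bank of England rate
    claimant_rate: float,            # local claimant count rate, %
    national_claimant_rate: float,   # national claimant count rate, %
    ltv: float = 0.75,               # ASSUMED average loan-to-value at purchase
    lender_spread: float = 0.015,    # ASSUMED lender margin over the base rate
    term_months: int = 300,          # ASSUMED 25-year term
    employment_weight: float = 0.1,  # ASSUMED weight on local labour-market conditions
) -> float:
    """Hypothetical score: relative change in monthly payment if the typical local
    mortgage were priced at today's rate, plus a local unemployment adjustment."""
    principal = median_price * ltv
    at_origination = monthly_payment(principal, origination_rate, term_months)
    at_current = monthly_payment(principal, bank_rate + lender_spread, term_months)
    payment_change = (at_current - at_origination) / at_origination
    # Positive where local claimant rates exceed the national rate, negative where below.
    employment_adjustment = employment_weight * (claimant_rate / national_claimant_rate - 1)
    return payment_change + employment_adjustment


score = mortgage_pressure_delta(250_000, 0.02, 0.04, claimant_rate=4.0, national_claimant_rate=4.0)
```

With a £250,000 median price, a 2% origination rate, a 4% base rate and local claimant rates equal to the national rate, the score comes out at roughly 0.45: the typical local payment would be about 45% higher at today's rate. The sketch deliberately simplifies by repricing the original balance over the original term, ignoring amortisation to date and fixed-rate periods.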

See the pipeline in action

Send us a sample of your postcodes and we'll show you what always-on demographic intelligence looks like on your actual customer base.

What changes when the data is always on

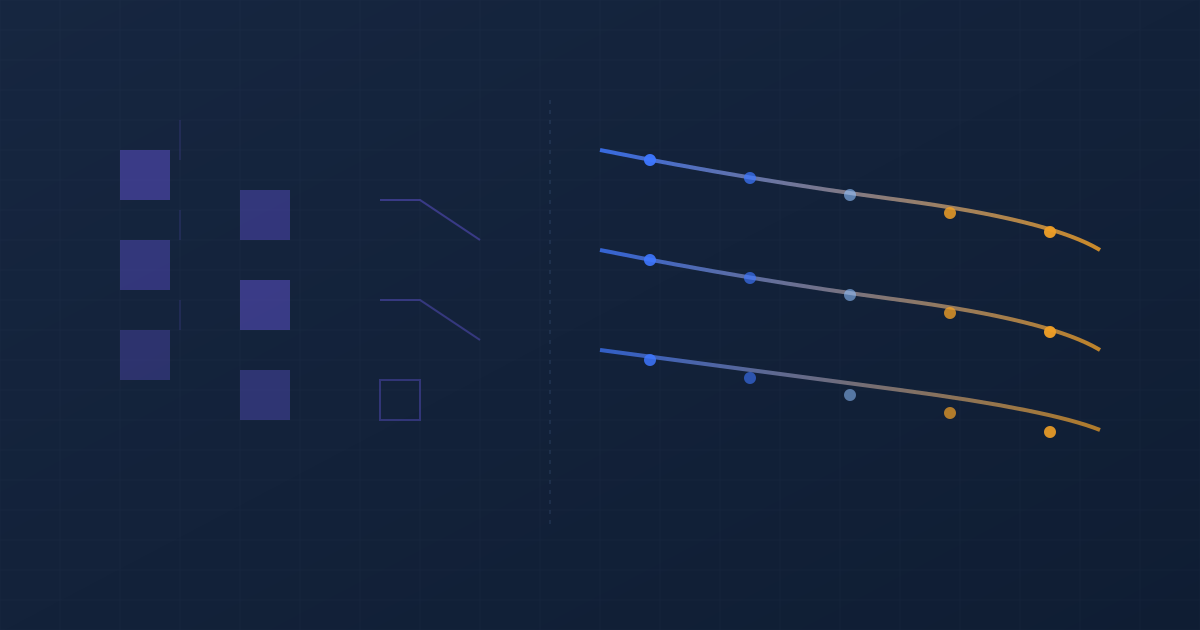

The most important change that continuous refresh enables is not that any individual attribute is more accurate at a point in time — though it is — but that the relationship between a data attribute and a business event can be monitored continuously rather than measured retrospectively. This makes possible a category of analytical application that the batch model cannot support.

Consider churn prediction in a utilities context. A model enriched with annual batch demographic data can tell you which customer segments have historically churned at higher rates. It cannot tell you that the financial stress indicators in a specific postcode cluster have risen materially in the past 60 days, a change which in historical data tends to precede switching behaviour by 90 to 120 days. That leading-indicator signal is only accessible with continuous data refresh. With batch data, you see it only in retrospect, after the churn has already happened.
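The 60-day comparison is straightforward to express once scores are refreshed continuously. The Python sketch below flags postcode clusters whose stress score rose by at least a given fraction over a trailing window. The 10% threshold, the cluster names, the score scale and the data layout are hypothetical, and the sketch does not model the 90 to 120 day lead time, which is an empirical claim about historical data.

```python
from __future__ import annotations

from bisect import bisect_right
from datetime import date, timedelta


def rising_stress(
    history: dict[str, list[tuple[date, float]]],  # cluster -> (date, score), sorted by date
    as_of: date,
    window_days: int = 60,
    min_rise: float = 0.10,  # ASSUMED threshold for a "material" rise
) -> list[str]:
    """Return clusters whose stress score rose by at least `min_rise` (relative)
    between `as_of - window_days` and `as_of`."""
    start = as_of - timedelta(days=window_days)
    flagged = []
    for cluster, series in history.items():
        dates = [d for d, _ in series]
        i_now = bisect_right(dates, as_of) - 1   # latest observation on or before as_of
        i_then = bisect_right(dates, start) - 1  # latest observation on or before the window start
        if i_then < 0 or i_now <= i_then:
            continue  # not enough history, or no update inside the window
        then, now = series[i_then][1], series[i_now][1]
        if then > 0 and (now - then) / then >= min_rise:
            flagged.append(cluster)
    return flagged


history = {
    "cluster_a": [(date(2026, 1, 1), 50.0), (date(2026, 3, 1), 58.0)],  # +16%
    "cluster_b": [(date(2026, 1, 1), 50.0), (date(2026, 3, 1), 52.0)],  # +4%
}
print(rising_stress(history, as_of=date(2026, 3, 10)))  # ['cluster_a']
```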

The same logic applies to credit risk. A Mortgage Pressure Delta score that updates as the Bank of England rate changes allows a lender to identify, in near real time, which postcodes have experienced the sharpest increase in estimated mortgage burden following a rate decision. A batch model would identify this shift at the next annual refresh — by which time the period of maximum risk elevation may have passed, or arrears may already have materialised.
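Operationally, the credit-risk use case is a re-scoring step triggered by a rate decision: estimate each postcode's burden at the old and new rates, then rank by the change. The Python sketch below takes the burden model as a parameter. The interest-only approximation and the per-postcode balances are hypothetical placeholders, not the Mortgage Pressure Delta itself.

```python
from __future__ import annotations

import heapq
from typing import Callable, Iterable


def sharpest_increases(
    postcodes: Iterable[str],
    burden: Callable[[str, float], float],  # estimated monthly burden for a postcode at a given rate
    old_rate: float,
    new_rate: float,
    k: int = 3,
) -> list[tuple[str, float]]:
    """Re-score every postcode at the new rate and return the k largest increases."""
    changes = {pc: burden(pc, new_rate) - burden(pc, old_rate) for pc in postcodes}
    return heapq.nlargest(k, changes.items(), key=lambda item: item[1])


# Hypothetical average outstanding balances per postcode (illustrative values only).
balances = {"AB1 2CD": 180_000.0, "EF3 4GH": 320_000.0, "IJ5 6KL": 95_000.0}


def interest_only_burden(postcode: str, annual_rate: float) -> float:
    # Crude interest-only approximation: monthly interest on the average balance.
    return balances[postcode] * annual_rate / 12


top = sharpest_increases(balances, interest_only_burden, old_rate=0.04, new_rate=0.0425, k=2)
print([pc for pc, _ in top])  # ['EF3 4GH', 'AB1 2CD']
```

The sketch assumes every borrower feels the new rate immediately. In practice, borrowers on fixed-rate deals are affected only when their fixed period ends, so a realistic burden function would weight by the local mix of fixed and variable mortgages.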

For marketing applications, continuous refresh enables something closer to environmental targeting — directing spend at customers who are, right now, in the conditions that historically precede purchase intent, rather than at customers who were in those conditions when the data was last updated.

Integration with existing stacks

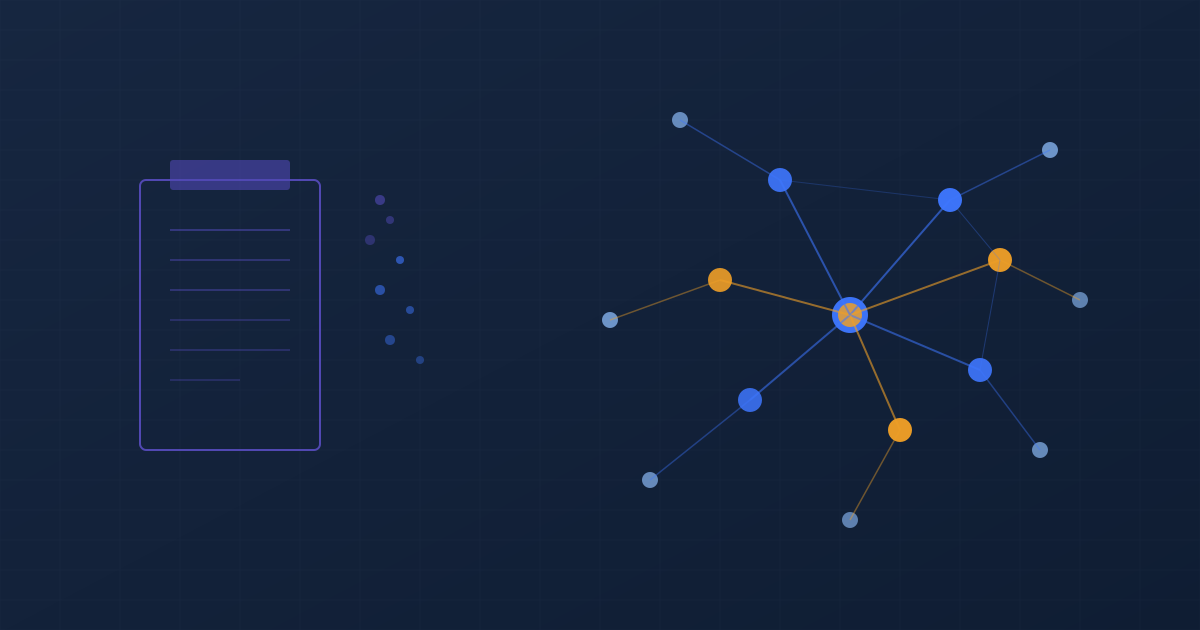

The common concern about always-on data is integration complexity. If the underlying data is changing continuously, how does it integrate with analytical models and CRM systems that are themselves built around periodic batch processes?

The answer, in practice, is that most commercial analytical applications do not require truly continuous data. What they require is data that is current within a commercially relevant time window — typically days or weeks rather than months or years. Cogstrata's delivery model allows clients to consume updated attributes on whatever schedule fits their operational process: daily via API for applications that run on a daily decision cycle, weekly for applications with longer action windows, or as a live data share for clients operating directly in Snowflake, BigQuery, or AWS.
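One way to make "current within a commercially relevant time window" operational is a freshness check that runs before each decision cycle and compares the age of the attributes with the length of that cycle. The Python sketch below is a minimal illustration; the function name and the example cycles and timestamps are hypothetical, not part of any Cogstrata API.

```python
from __future__ import annotations

from datetime import datetime, timedelta, timezone


def is_fresh(last_refreshed: datetime, decision_cycle: timedelta,
             now: datetime | None = None) -> bool:
    """True if attributes were refreshed within one decision cycle of `now`.

    Both datetimes must be timezone-aware so the subtraction is well defined.
    """
    if now is None:
        now = datetime.now(timezone.utc)
    return now - last_refreshed <= decision_cycle


# Hypothetical decision cycles for two applications.
DAILY_COLLECTIONS = timedelta(days=1)
WEEKLY_CAMPAIGN = timedelta(days=7)

now = datetime(2026, 3, 12, 9, 0, tzinfo=timezone.utc)
last_annual_batch = datetime(2025, 9, 1, tzinfo=timezone.utc)
last_daily_api_pull = datetime(2026, 3, 12, 2, 0, tzinfo=timezone.utc)

print(is_fresh(last_annual_batch, WEEKLY_CAMPAIGN, now))      # False
print(is_fresh(last_daily_api_pull, DAILY_COLLECTIONS, now))  # True
```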

The transition from batch to always-on does not require rebuilding the analytical stack. It requires updating the data pipeline layer that feeds the existing stack — replacing the annual batch import with a more frequent refresh from a Cogstrata data share. In most cases, the models built on the existing data continue to function; they simply produce better outputs because the data they are working with is more current.
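A minimal sketch of what updating only the pipeline layer can look like, using Python's built-in `sqlite3` module as a stand-in for a warehouse: each refresh delivery is upserted into a postcode attribute table, and existing models keep reading a view that joins those attributes onto customer records. Table, column and view names are hypothetical. In Snowflake or BigQuery the equivalent step would typically be a `MERGE` statement run on each delivery; the `ON CONFLICT ... DO UPDATE` syntax used here requires SQLite 3.24 or later.

```python
from __future__ import annotations

import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, postcode TEXT NOT NULL);
    CREATE TABLE postcode_attributes (
        postcode TEXT PRIMARY KEY,
        mortgage_pressure REAL,
        refreshed_at TEXT
    );
    -- Downstream models read this view; its shape never changes between refreshes.
    CREATE VIEW enriched_customers AS
        SELECT c.customer_id, c.postcode, a.mortgage_pressure, a.refreshed_at
        FROM customers AS c
        LEFT JOIN postcode_attributes AS a ON a.postcode = c.postcode;
    """
)


def apply_refresh(conn: sqlite3.Connection, rows: list[tuple[str, float, str]]) -> None:
    """Upsert one delivery of (postcode, mortgage_pressure, refreshed_at) rows."""
    with conn:  # commit the whole delivery as one transaction, or roll back on error
        conn.executemany(
            """
            INSERT INTO postcode_attributes (postcode, mortgage_pressure, refreshed_at)
            VALUES (?, ?, ?)
            ON CONFLICT(postcode) DO UPDATE SET
                mortgage_pressure = excluded.mortgage_pressure,
                refreshed_at = excluded.refreshed_at
            """,
            rows,
        )


with conn:
    conn.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "AB1 2CD"), (2, "EF3 4GH")])

apply_refresh(conn, [("AB1 2CD", 0.31, "2026-03-01"), ("EF3 4GH", 0.12, "2026-03-01")])
apply_refresh(conn, [("AB1 2CD", 0.38, "2026-03-08")])  # a later delivery with only changed postcodes

print(conn.execute(
    "SELECT customer_id, mortgage_pressure FROM enriched_customers ORDER BY customer_id"
).fetchall())  # [(1, 0.38), (2, 0.12)]
```

Because the view's columns stay the same, models that query `enriched_customers` need no changes; only the cadence at which `apply_refresh` runs moves from annual to daily or weekly.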

The batch model was the best available architecture for its time. The constraints that produced it no longer exist. The question for every analytics team operating from annual or biennial demographic data is how much commercial value is being left on the table by the architecture they inherited from an earlier era — and how much of that value they could recover with a change that is, in practice, considerably simpler to make than it appears.

See always-on data in action on your own customer base

Cogstrata integrates directly with Snowflake, BigQuery, and AWS — or delivers via API and batch export. Free sample enrichment, results in 24 hours.
