The true cost of stale data: a CFO's guide to demographic intelligence ROI

Published 26 February 2026 · 8 min read

Data quality conversations in organisations typically focus on completeness, accuracy, and consistency. Freshness — the degree to which data reflects current rather than historical reality — rarely receives the same attention, even though it may be the dimension with the largest commercial consequence. This is particularly true for demographic and socioeconomic data, where the gap between the world a dataset describes and the world a business is actually operating in can represent significant and quantifiable financial loss.

This piece attempts to put structure around what that cost looks like in practice: not in abstract terms ("your data might be outdated"), but in the concrete terms that matter to a CFO or a chief analytics officer trying to justify investment in better intelligence infrastructure.

The three cost categories

The financial cost of demographic data lag manifests primarily in three areas: campaign targeting inefficiency, credit risk mispricing, and retention failure. Each has a different signature and a different order of magnitude depending on the size and sector of the business.

Campaign targeting inefficiency is the most visible. Marketing teams allocate spend across customer segments based on predicted response rates, which are in turn driven partly by demographic proxies such as income band, household type, and financial resilience. When those proxies are outdated, spend is misallocated. A segment that was historically high-value for a given product may have come under enough financial stress to reduce its conversion rate materially. The misallocation may be modest per campaign (a few percentage points of budget directed at segments with lower actual conversion than predicted), but accumulated across a year of campaigns it adds up to a significant amount of wasted spend.

For a mid-market retail business spending £10 million annually on direct and digital marketing, a 5% targeting efficiency loss driven by demographic data lag represents £500,000 in wasted spend per year. That is a conservative estimate; conversations with marketing analytics teams suggest the actual figure is often higher, particularly in categories with significant cost-of-living sensitivity.
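The arithmetic behind that figure can be written as a small sketch. The function name and parameters are illustrative assumptions rather than part of any Cogstrata tooling; the inputs are the £10 million spend and 5% efficiency loss quoted above.

```python
def annual_targeting_waste(annual_spend_gbp: float, efficiency_loss: float) -> float:
    """Spend lost per year when a fraction of budget reaches mis-profiled segments.

    efficiency_loss is a fraction (0.05 means 5%), not a percentage.
    """
    if not 0.0 <= efficiency_loss <= 1.0:
        raise ValueError("efficiency_loss must be a fraction between 0 and 1")
    return annual_spend_gbp * efficiency_loss


# Inputs taken from the example in the text: £10m spend, 5% loss.
waste = annual_targeting_waste(10_000_000, 0.05)
print(f"£{waste:,.0f} per year")
```

Substituting your own marketing budget and a loss estimate from a drift analysis gives a first-order figure to test against campaign performance data.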

Credit risk mispricing is more consequential in absolute terms but less visible in attribution. When a lender uses demographic classification as a component of credit risk assessment, they are implicitly assuming that the relationship between demographic type and financial behaviour has remained stable since the classification was built. In a period of significant macroeconomic disruption — as the UK has experienced since 2021 — that assumption fails.

The failure mode is systematic underestimation of risk in segments that have experienced disproportionate financial stress. A Mosaic type or Acorn category that historically correlated with low arrears rates may now contain a meaningful proportion of households under mortgage pressure, with compressed discretionary income and elevated susceptibility to payment difficulty. Pricing credit risk based on the pre-stress profile means extending credit at rates that do not adequately reflect current conditions. The cost shows up in higher-than-projected default rates — often attributed to macroeconomic conditions in aggregate, without attribution to the demographic data quality gap that contributed to the mispricing.

Retention failure sits between the two. Churn prediction models that rely on demographic classification to identify at-risk customers will systematically misidentify the segments where churn risk has risen most sharply since the classification was built. Customers who fell into "loyal" demographic types based on historical survey data may now be under financial pressure that makes them highly price-sensitive and actively open to switching. The retention campaign never reaches them because the model does not flag them as at risk. The customer churns. The cost is the full lifetime value of the lost relationship — which, in financial services, can run to tens of thousands of pounds per customer.
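A rough way to size this exposure is to multiply the number of churned customers the model never flagged by the lifetime value of each relationship. The sketch below is illustrative only: the function, the `save_rate` parameter (the share of those customers a retention campaign would have kept, had it reached them), and the input numbers are all assumptions, not figures from any real portfolio.

```python
def missed_retention_cost(
    unflagged_churners: int,
    lifetime_value_gbp: float,
    save_rate: float = 1.0,
) -> float:
    """Value lost from customers who churned without ever being flagged as at risk.

    save_rate is an assumed fraction of those customers that a retention
    campaign would have kept. The default of 1.0 matches the text's framing
    of losing the full lifetime value; lower it for a more cautious estimate.
    """
    if unflagged_churners < 0 or lifetime_value_gbp < 0:
        raise ValueError("counts and values must be non-negative")
    if not 0.0 <= save_rate <= 1.0:
        raise ValueError("save_rate must be a fraction between 0 and 1")
    return unflagged_churners * lifetime_value_gbp * save_rate


# Hypothetical inputs: 200 unflagged churners, £20,000 lifetime value each.
upper_bound = missed_retention_cost(200, 20_000.0)
cautious = missed_retention_cost(200, 20_000.0, save_rate=0.25)
print(f"Upper bound: £{upper_bound:,.0f}; with a 25% save rate: £{cautious:,.0f}")
```

The upper bound treats every lost relationship as recoverable, which is rarely realistic; the save rate makes that assumption explicit so it can be challenged.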


The benchmark question

The challenge in making the ROI case for fresher demographic data is that the counterfactual is difficult to observe. You cannot easily measure the campaigns you should have suppressed, the credit you should have priced differently, or the customers you should have retained, because you did not run the alternative experiment. The cost of data lag is structurally hidden in aggregate performance metrics that attribute variance to macroeconomic conditions rather than data quality.

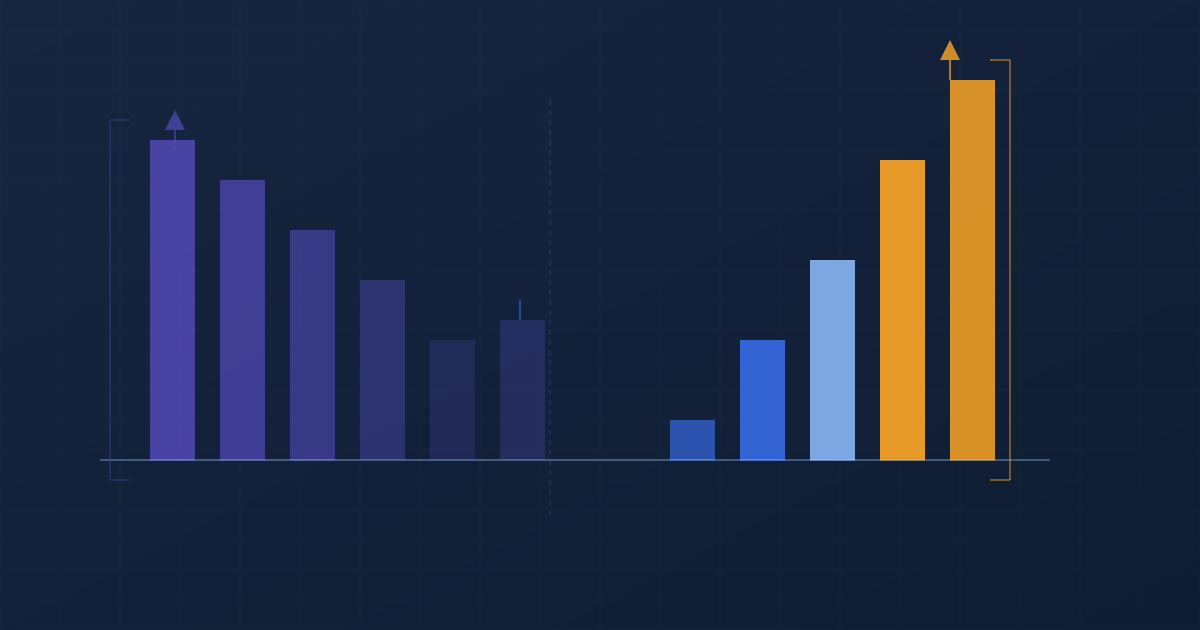

The most effective approach we have seen for quantifying the gap is a segmentation drift analysis: taking a sample of the current customer base, enriching it with continuously refreshed demographic attributes, and comparing the resulting profile against the existing classification-derived segmentation. The divergence between the two (how many customers have meaningfully different predicted financial behaviour under the current-data view versus the legacy-classification view) provides a direct proxy for the targeting and risk mispricing exposure.

In most cases, the segmentation drift analysis reveals that between 15% and 30% of the customer base would be classified differently (or would receive different risk scores, retention priority rankings, or campaign targeting decisions) under a continuously refreshed data model. The financial value of correcting those misclassifications is the starting point for an ROI conversation about data infrastructure investment.
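At its simplest, the drift measure is the share of customers whose segment differs between the legacy view and the refreshed view. The sketch below assumes each view is a mapping from customer ID to segment label; the function, the segment names, and the five-customer sample are made up for illustration and do not represent Cogstrata's method or any real classification.

```python
from collections import Counter


def segmentation_drift(
    legacy: dict[str, str], refreshed: dict[str, str]
) -> tuple[float, Counter]:
    """Compare two segment assignments for the same customers.

    Returns the fraction of shared customers whose segment changed, plus a
    count of each (legacy_segment, refreshed_segment) transition.
    """
    shared_ids = legacy.keys() & refreshed.keys()
    if not shared_ids:
        raise ValueError("no customers appear in both views")
    moves = Counter(
        (legacy[cid], refreshed[cid])
        for cid in shared_ids
        if legacy[cid] != refreshed[cid]
    )
    return sum(moves.values()) / len(shared_ids), moves


# Hypothetical sample: one of five customers moves from "affluent" to "stretched".
legacy_view = {
    "c1": "affluent", "c2": "affluent", "c3": "stretched",
    "c4": "comfortable", "c5": "comfortable",
}
refreshed_view = {
    "c1": "affluent", "c2": "stretched", "c3": "stretched",
    "c4": "comfortable", "c5": "comfortable",
}

rate, moves = segmentation_drift(legacy_view, refreshed_view)
print(f"{rate:.0%} of customers reclassified")
for (old, new), count in moves.most_common():
    print(f"  {old} -> {new}: {count}")
```

The transition counts matter as much as the headline rate: a move from a low-risk to a high-risk segment carries a different commercial weight than a move between two similar segments.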

What the investment looks like

Cogstrata's pricing model is designed to make the ROI calculation straightforward. Access to the full 500+ attribute set starts at £99 per month for API-based access at lower volumes, scaling to enterprise data share arrangements for organisations integrating directly into Snowflake, AWS, or BigQuery at scale. There is no requirement to rip out existing data infrastructure. Cogstrata augments what is already there.

The practical starting point for most organisations is a free sample enrichment run — taking the current customer base, appending Cogstrata's current-data attributes, and using the resulting segmentation drift analysis to size the opportunity. That analysis is typically sufficient to build an internal business case for broader data infrastructure investment, because the gap between the legacy classification view and the current-data view tends to be large enough to be commercially significant on its own terms.

The CFO framing for this conversation is simple: every month that passes with demographic data two or three years out of date is a month in which marketing budget is partially misdirected, credit risk is partially mispriced, and retention interventions partially miss the customers who need them. The cost of better data is modest. The cost of not having it is structural and ongoing.

Run a segmentation drift analysis on your own data

We'll enrich a sample of your customer postcodes with Cogstrata's current-data attributes and show you exactly how much your existing segmentation has drifted from present reality. Free, no contract required.
